Robbyant, an embodied AI company within Ant Group, today announced the open-source release of LingBot-VLA, a vision-language-action (VLA) model designed to serve as a “universal brain” for real-world robotics. The model is intended to reduce post-training costs and accelerate the path to scalable deployment.

This press release features multimedia. View the full release here: https://www.businesswire.com/news/home/20260127455032/en/

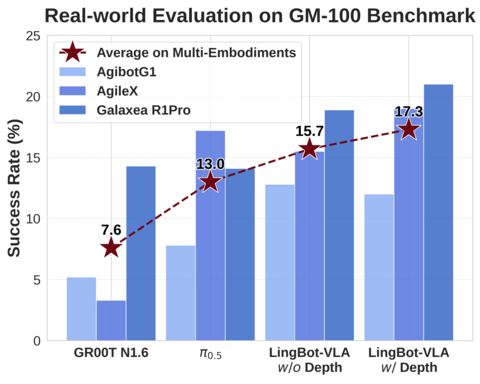

On the GM-100 real-robot benchmark, LingBot-VLA outperformed other models in cross-morphology generalization

So far, LingBot-VLA has been successfully adapted to robots from leading manufacturers, including Galaxea Dynamics and AgileX Robotics, demonstrating strong cross-morphology transfer capabilities across diverse robot platforms.

The model’s performance was evaluated on the GM-100 benchmark, a comprehensive evaluation suite open-sourced by Shanghai Jiao Tong University that comprises 100 real-world tasks. In tests conducted across three distinct physical robot platforms, LingBot-VLA achieved higher task success rates than other evaluated models. Notably, when depth information was included, the model’s spatial perception improved significantly, setting a new record on task success rate.

Additionally, LingBot-VLA was evaluated on the RoboTwin 2.0 simulation benchmark, which features 50 challenging tasks under intense environmental randomization, including varying lighting, clutter, and height perturbations. There, the model leveraged its learnable query alignment mechanism to integrate depth cues effectively and achieved a higher task success rate in complex scenarios, demonstrating robust performance in both simulation and real-world deployment.

To date, the deployment of embodied AI has been hampered by cross-platform generalization challenges stemming from differences in robot morphology, task definitions, and operating environments. Developers are often forced to repeatedly collect data, retrain models, and fine-tune parameters for each new deployment, leading to high costs, low reusability, and limited scalability.

To address these challenges, LingBot-VLA was pre-trained on over 20,000 hours of large-scale real-world interaction data, covering nine mainstream dual-arm robot configurations, including AgileX, Galaxea R1Pro, R1Lite, and AgiBot G1. This enables a single model, or a universal brain, to be deployed across a wide range of robotic morphologies, including single-arm, dual-arm, and humanoid platforms, while maintaining high success rates and robustness despite variations in tasks, environments, or hardware configurations.

Beyond generalization, LingBot-VLA also demonstrates strong data and computational efficiency. With comprehensive optimizations to its underlying codebase, LingBot-VLA achieves a 1.5x to 2.8x improvement in training speed compared with other frameworks such as StarVLA and OpenPI.

Notably, this open-source release includes not only the model weights but also a complete, production-ready codebase, featuring tools for data processing, efficient fine-tuning, and automated evaluation. This toolchain can help shorten training cycles and reduce both compute requirements and the time to commercial deployment, allowing developers to rapidly adapt LingBot-VLA to their own robots and use cases with minimal overhead.

Zhu Xing, CEO of Robbyant, said: “For embodied intelligence to achieve large-scale adoption, we need highly capable and cost-effective foundation models that work reliably on real hardware. With LingBot-VLA, we aim to push the limits of reusable, verifiable, and scalable embodied AI for real-world deployment. Our goal is to accelerate the integration of AI into the physical world so it can serve everyone sooner.”

“LingBot-VLA is Ant Group’s first open-source embodied AI model and marks another milestone in our efforts toward Artificial General Intelligence (AGI),” Zhu added. “Ant Group is committed to advancing AGI through an open and collaborative approach. To this end, we’ve launched InclusionAI, a comprehensive technological ecosystem spanning foundational models, multimodal intelligence, reasoning, novel architectures, and embodied AI. The open-sourcing of LingBot-VLA is a key step in this initiative. We look forward to working with developers worldwide to accelerate the development and large-scale adoption of embodied intelligence and help advance progress toward AGI.”

The announcement was made as part of Robbyant’s “Evolution of Embodied AI Week” initiative. On January 27, Robbyant unveiled LingBot-Depth, a high-precision spatial perception model. When paired with LingBot-Depth, LingBot-VLA can leverage higher-quality depth representations, effectively upgrading the system’s “vision” and enabling robots to “see more clearly and act more intelligently.”

To learn more about LingBot-VLA, please visit:

- Code: https://github.com/Robbyant/lingbot-vla

- Tech Report: https://arxiv.org/abs/2601.18692

- Hugging Face: https://huggingface.co/collections/robbyant/lingbot-vla

About Robbyant

Robbyant is an embodied intelligence company within Ant Group, dedicated to advancing embodied intelligence through cutting-edge software and hardware technologies. Robbyant independently develops foundational large models for embodied AI and actively explores next-generation intelligent devices. Its aim is to create robotic companions and caregivers that truly understand and enhance people’s everyday lives, and that deliver reliable intelligent services across key use cases such as elderly care, medical assistance, and household tasks.

To learn more about Robbyant, please visit: www.robbyant.com

Contacts

Media Inquiries

Vick Li Wei

Ant Group

vick.lw@antgroup.com