OpenAI officially launched Sora 2 on September 30, 2025, with public access commencing on October 1, 2025. This highly anticipated release marks a monumental leap in the field of generative artificial intelligence, particularly in the creation of realistic video and synchronized audio. Hailed by OpenAI as the "GPT-3.5 moment for video," Sora 2 is poised to fundamentally reshape the landscape of content creation, offering unprecedented capabilities that promise to democratize high-quality video production and intensify the ongoing AI arms race.

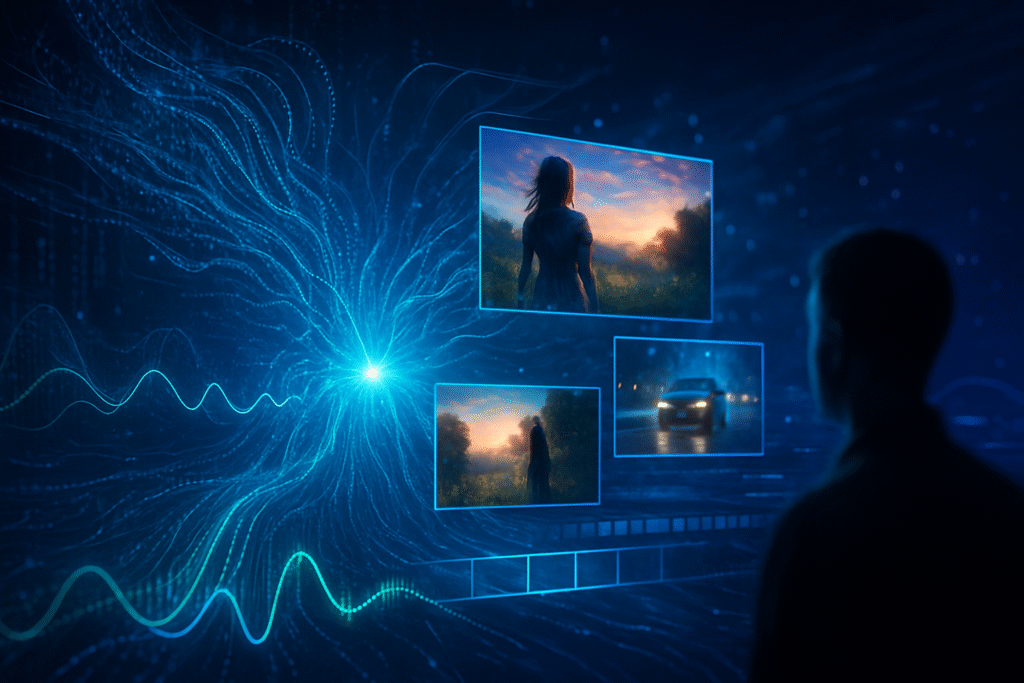

Sora 2's immediate significance lies in how far it lowers the technical and resource barriers to video production. It empowers a new generation of content creators, from independent filmmakers to marketers, to generate professional-grade visual narratives with ease. This innovation not only sets a new benchmark for generative AI video but also signals OpenAI's strategic entry into the social media sphere with its dedicated iOS app, challenging established platforms and pushing the boundaries of AI-driven social interaction.

Unpacking the Technical Marvel: Sora 2's Advanced Capabilities

Sora 2 leverages a sophisticated diffusion transformer architecture, employing latent video diffusion processes with transformer-based denoisers and multimodal conditioning. This allows it to generate temporally coherent frames and seamlessly aligned audio, transforming static noise into detailed, realistic video through iterative noise removal. This approach is a significant architectural and training advance over the original Sora, which debuted in February 2024.
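The "iterative noise removal" described above can be illustrated with a deliberately tiny sketch. It is not OpenAI's implementation, which has not been published as code. The function names (`toy_denoiser`, `sample`), the step schedule, and the latent shape are all hypothetical, and the denoiser here simply cheats by looking at a known target where a real system would use a trained transformer conditioned on the prompt.

```python
import random


def mean_abs_error(a, b):
    """Average absolute difference between two lists of latent frames."""
    values = [abs(x - y) for fa, fb in zip(a, b) for x, y in zip(fa, fb)]
    return sum(values) / len(values)


def toy_denoiser(latent, target):
    """Hypothetical stand-in for a learned denoiser: returns the predicted noise.

    A real model would predict this from the noisy latent, the timestep and
    the text conditioning; this toy version reads it off a known target.
    """
    return [[x - t for x, t in zip(frame, tframe)]
            for frame, tframe in zip(latent, target)]


def sample(target, steps=10, seed=0):
    """Start from Gaussian noise and remove a fraction of it at every step."""
    rng = random.Random(seed)
    latent = [[rng.gauss(0.0, 1.0) for _ in frame] for frame in target]
    errors = [mean_abs_error(latent, target)]
    for t in range(steps, 0, -1):
        noise = toy_denoiser(latent, target)
        # Remove 1/t of the predicted noise; the final step (t == 1) removes all of it.
        latent = [[x - n / t for x, n in zip(frame, nframe)]
                  for frame, nframe in zip(latent, noise)]
        errors.append(mean_abs_error(latent, target))
    return latent, errors


# Three "frames", each a four-dimensional latent vector (assumed toy sizes).
TARGET = [[0.1 * f + 0.01 * d for d in range(4)] for f in range(3)]
final_latent, error_trace = sample(TARGET)
```

Inspecting `error_trace` shows the distance to the target shrinking at every step, which is the basic intuition behind turning static noise into a coherent clip. In a latent video diffusion model, the same loop would run over a compressed representation of all frames at once, which is what lets the denoiser keep them temporally consistent.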

A cornerstone of Sora 2's technical prowess is its unprecedented realism and physical accuracy. Unlike previous AI video models that often struggled with motion realism, object permanence, and adherence to physical laws, Sora 2 produces strikingly lifelike outputs. It can model complex interactions with plausible dynamics, such as a basketball rebounding realistically or a person performing a backflip on a paddleboard, significantly minimizing the "uncanny valley" effect. The model now better understands and obeys the laws of physics, even if it means deviating from a prompt to maintain physical consistency.

A major differentiator is Sora 2's synchronized audio integration. It can automatically embed synchronized dialogue, realistic sound effects (SFX), and full ambient soundscapes directly into generated videos. This eliminates the need for separate audio generation and complex post-production alignment, streamlining creative workflows. While Sora 1 produced video-only output, Sora 2's native audio generation for clips up to 60 seconds is a critical new capability.

Furthermore, Sora 2 offers advanced user controllability and temporal consistency. It can generate continuous videos up to 90 seconds in length (up to 60 seconds with synchronized audio) at ultra-high 4K resolution. Users have finer control over camera movements, shot composition, and stylistic choices (cinematic, realistic, anime). The model can follow intricate, multi-shot instructions while maintaining consistency across the generated world, including character movements, lighting, and environmental elements. The new "Cameo" feature allows users to insert a realistic, verified likeness of themselves or others into AI-generated scenes based on a short, one-time video and audio recording, adding a layer of personalization and control.

Initial reactions from the AI research community and industry experts have been a mix of awe and concern. Many are impressed by the leap in realism, physical accuracy, and video length, likening it to a "GPT-4 moment" for AI video. However, significant concerns have been raised regarding the potential for "AI slop"—generic, low-value content—and the proliferation of deepfakes, non-consensual impersonation, and misinformation, especially given the enhanced realism. OpenAI has proactively integrated safety measures, including visible, moving watermarks and embedded Content Credentials (C2PA) metadata in all generated videos, alongside prompt filtering, output moderation, and strict consent requirements for the Cameo feature.

Competitive Ripples: Impact on AI Companies and Tech Giants

The launch of Sora 2 by OpenAI (private) significantly intensifies the competitive landscape within the AI industry, pushing major tech giants and AI labs to accelerate their own generative video capabilities. Sora 2's advancements set a new benchmark, compelling rivals to strive for similar levels of sophistication in realism, physical accuracy, and audio integration.

Google (NASDAQ: GOOGL) is a prominent player in this space with its Veo model, now in its third iteration (Veo 3). Veo 3 offers native audio generation, high quality, and realism, and is integrated into Google Vids, an AI-powered video creator and editor available on Workspace plans. Google's strategy focuses on integrating AI video into its productivity suite and cloud services (Vertex AI), aiming for broad user accessibility and enterprise solutions. While Sora 2 emphasizes a standalone app experience, Google's focus on seamless integration with its vast ecosystem positions it as a strong competitor, particularly in business and education.

Meta (NASDAQ: META) has also made considerable strides, launching "Vibes," a dedicated feed for short-form, AI-generated videos integrated with Instagram and Facebook. Meta's approach is to embed AI video creation deeply within its social media platforms to boost engagement and offer new creative outlets. Meta's Movie Gen model also handles text-to-video, text-to-audio, and text-to-image generation. Sora 2's advanced capabilities could pressure Meta to further enhance the realism and control of its generative video offerings to maintain competitiveness in user-generated content and social media engagement.

Adobe (NASDAQ: ADBE), a long-standing leader in creative software, is expanding its AI strategy with new premium video generation capabilities under its Firefly AI platform. The Firefly Video Model, now in public beta, enables users to generate video clips from text prompts and enhance footage. Adobe's key differentiator is its focus on "commercially safe" and "IP-friendly" content, as Firefly is trained on properly licensed material, mitigating copyright concerns for professional users. Sora 2's impressive realism and control will challenge Adobe to continuously push the boundaries of its Firefly Video Model, especially in achieving photorealistic outputs and complex scene generation, while upholding its strong stance on commercial safety.

For startups, Sora 2 presents both immense opportunities and significant threats. Startups focused on digital marketing, social media content, and small-scale video production can leverage Sora 2 to produce high-quality videos affordably. Furthermore, companies building specialized tools or platforms on top of Sora 2's API (when released) can create niche solutions. Conversely, less advanced AI video generators may struggle to compete, and traditional stock footage libraries could see reduced demand as custom AI-generated content becomes more accessible. Certain basic video editing and animation services might also face disruption.

Wider Significance: Reshaping the AI Landscape and Beyond

Sora 2's emergence signifies a critical milestone in the broader AI landscape, reinforcing several key trends and extending the impact of generative AI into new frontiers. OpenAI explicitly positions Sora 2 as a "GPT-3.5 moment for video," indicating a transformation akin to the impact large language models had on text generation. It represents a significant leap from AI that understands and generates language to AI that can deeply understand and simulate the visual and physical world.

The model's ability to generate longer, coherent clips with narrative arcs and synchronized audio will democratize video production on an unprecedented scale. Independent filmmakers, marketers, educators, and even casual users can now produce professional-grade content without extensive equipment or specialized skills, fostering new forms of storytelling and creative expression. The dedicated Sora iOS app, with its TikTok-style feed and remix features, promotes collaborative AI creativity and new paradigms for social interaction centered on AI-generated media.

However, this transformative potential is accompanied by significant concerns. The heightened realism of Sora 2 videos amplifies the risk of misinformation and deepfakes. The ability to generate convincing, personalized content, especially with the "Cameo" feature, raises alarms about the potential for malicious use, non-consensual impersonation, and the erosion of trust in visual media. OpenAI has implemented safeguards like watermarks and C2PA metadata, but the battle against misuse will be ongoing. There are also considerable anxieties regarding job displacement within creative industries, with professionals fearing that AI automation could render their skills obsolete. Filmmaker Tyler Perry, for instance, has voiced strong concerns about the impact on employment. While some argue AI will augment human creativity, reshaping roles rather than replacing them, studies indicate a potential disruption of over 100,000 U.S. entertainment jobs by 2026 due to generative AI.

Sora 2 also underscores the accelerating trend towards multimodal AI development, capable of processing and generating content across text, image, audio, and video. This aligns with OpenAI's broader ambition of developing AI models that can deeply understand and accurately simulate the physical world in motion, a capability considered paramount for achieving Artificial General Intelligence (AGI). The powerful capabilities of Sora 2 amplify the urgent need for robust ethical frameworks, regulatory oversight, and transparency tools to ensure responsible development and deployment of AI technologies.

The Road Ahead: Future Developments and Predictions

The trajectory of Sora 2 and the broader AI video generation landscape is set for rapid evolution, promising both exciting applications and formidable challenges. In the near term, we can anticipate wider accessibility beyond the current invite-only iOS app, with an Android version and broader web access via sora.com. Crucially, an API release is expected, which will democratize access for developers and enable third-party tools to integrate Sora 2's capabilities, fostering a wider ecosystem of AI-powered video applications. OpenAI is also exploring new monetization models, including potential revenue-sharing for creators and usage-based pricing upon API release, with ChatGPT Pro subscribers already having access to an experimental "Sora 2 Pro" model.

Looking further ahead, long-term developments are predicted to include even longer, more complex, and hyper-realistic videos, overcoming current limitations in duration and maintaining narrative coherence. Future models are expected to improve emotional storytelling and human-like authenticity. AI video generation tools are likely to become deeply integrated with existing creative software and extend into new domains such as augmented reality (AR), virtual reality (VR), video games, and traditional entertainment for rapid prototyping, storyboarding, and direct content creation. Experts predict a shift towards hyper-individualized media, where AI creates and curates content specifically tailored to the user's tastes, potentially leading to a future where "unreal videos" become the centerpiece of social feeds.

Potential applications and use cases are vast, ranging from generating engaging short-form videos for social media and advertisements, to rapid prototyping and design visualization, creating customized educational content, and streamlining production in filmmaking and gaming. In healthcare, AI video could visualize complex concepts to support learning and treatment, and in urban planning it could aid smart city development.

However, several challenges must be addressed. The primary concern remains the potential for misinformation and deepfakes, which could erode trust in visual evidence. Copyright and intellectual property issues, particularly concerning the use of copyrighted material in training data, will continue to fuel debate. Job displacement within creative industries remains a significant anxiety. Technical limitations in maintaining consistency over very long durations and precisely controlling specific elements within generated videos still exist. The high computational costs associated with generating high-quality AI video also limit accessibility. Ultimately, the industry will need to strike a delicate balance between technological advancement and responsible AI governance, demanding robust ethical guidelines and effective regulatory frameworks.

Experts foresee a "ChatGPT for creativity" moment, signaling a new era for creative expression through AI. The launch of Sora's social app is viewed as the beginning of an "AI video social media war" with competing platforms emerging. Within the next 18 months, creating 3-5 minute videos with coherent plots from detailed prompts is expected to become feasible. The AI video market is projected to become a multi-billion-dollar industry by 2030, with significant economic impacts and the emergence of new career opportunities in areas like prompt engineering and AI content curation.

A New Horizon: Concluding Thoughts on Sora 2's Impact

OpenAI's Sora 2 is not merely an incremental update; it is a declaration of a new era in video creation. Its official launch on September 30, 2025, marks a pivotal moment in AI history, pushing the boundaries of what is possible in generating realistic, controllable video and synchronized audio. The model's ability to simulate the physical world with unprecedented accuracy, combined with its intuitive social app, signifies a transformative shift in how digital content is conceived, produced, and consumed.

The key takeaways from Sora 2's arrival are clear: the democratization of high-quality video production, the intensification of competition among AI powerhouses, and the unveiling of a new paradigm for AI-driven social interaction. Its significance in AI history is comparable to major breakthroughs in language models, solidifying OpenAI's position at the forefront of multimodal generative AI.

The long-term impact will be profound, reshaping creative industries, marketing, and advertising, while also posing critical societal challenges. The potential for misinformation and job displacement demands proactive and thoughtful engagement from policymakers, developers, and the public alike. However, the underlying ambition to build AI models that deeply understand the physical world through "world simulation technology" positions Sora 2 as a foundational step toward more generalized and intelligent AI systems.

In the coming weeks and months, watch for the expansion of Sora 2's availability to more regions and platforms, particularly the anticipated API access for developers. The evolution of content on the Sora app, the effectiveness of OpenAI's safety guardrails, and the responses from rival AI companies will be crucial indicators of the technology's trajectory. Furthermore, the ongoing ethical and legal debates surrounding copyright, deepfakes, and socioeconomic impacts will shape the regulatory landscape for this powerful new technology. Sora 2 promises immense creative potential, but its responsible development and deployment will be paramount to harnessing its benefits sustainably and ethically.
